Playing the white pieces, the banks led with the regulatory capture opening. In a sequence of expertly coordinated moves, they progressively took control of the chessboard. That should have led to an easy win, but a few careless moves handed positional advantage to the regulators' black pieces. With their position weakened, the banks subsequently lost their queen. The regulators continued to press their positional and material advantage until checkmate was inevitable.

As defined in Mattli and Woods' The Politics of Global Regulation, regulatory capture is the “de facto control of the state and its regulatory agencies by the 'regulated' interests, enabling these interests to transfer wealth to themselves at the expense of society.”

Playing the regulatory capture opening isn't without its risks; it is prone to creating short-term advantages that are rarely sustainable over the long term.

Calculating Capital

When the Basel Committee on Banking Supervision first mooted the setting aside of protective capital for operational risks in the late 1990s, large international banks lobbied for their in-house models to provide the basis for calculating the new regulatory capital charge. The Basel Committee acquiesced and, in so doing, handed the initiative for their development to the elite banks.

Basel II attached an Advanced Measurement Approach (AMA) tag to the in-house models. A thin principles-based framework was also offered in the expectation that a robust AMA framework, with comparable bank-to-bank outputs, would emerge over time. But that never happened. Once each bank had control of the method of its own regulatory capital calculation, it held onto it. Like a queen on the chessboard, the calculation could be moved wherever it gave the bank the best positional advantage.

The banks' slickness in taking control of the regulatory capital agenda was not replicated in the operating space, where events exposed their susceptibility to carelessness on a grand scale. From the mid-1990s, the banking sector suffered a series of costly scandals that included the misguided or fraudulent activities of rogue traders, the mis-selling of payment protection insurance (PPI) in the U.K., and the subprime fiasco that became the backdrop to the global financial crisis of 2007-08.

Regulators Strike Back

The regulatory response, in the form of Basel III, was swift and severe. It was the metaphorical removal of the banks' pieces from the chessboard as the regulatory capital requirement was ramped up to eye-watering levels, and incremental control mechanisms were introduced that restricted banks' risk-taking and freedom to operate. The banks then suffered the ultimate humiliation when the regulators removed their “AMA queen.”

Checkmate came in the form of the Basel Committee's Standardized Approach (SA). In effect, the SA determines the amount of OpRisk capital to be set aside by each bank using financial proxies in place of banks' own internal risk management systems.

The SA is calibrated to produce an oversized capital buffer to ensure the ongoing safety and stability of the banking sector. For the banks, it means holding excessive amounts of costly, inert and unproductive OpRisk capital.
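
To make the SA's reliance on financial proxies concrete, the sketch below follows the calculation as set out in the Basel Committee's finalised Basel III standard: a Business Indicator (BI) built from financial statement items is converted into a Business Indicator Component (BIC) using marginal coefficients of 12%, 15% and 18%, and then scaled by an Internal Loss Multiplier (ILM) driven by a bank's average historical losses. The function names and the use of EUR billions as the unit are illustrative choices, national discretions (such as setting the ILM to 1 for all banks) are ignored, and the figures should be checked against the published standard before any real use.

```python
import math

# Marginal coefficients by Business Indicator bucket (upper bound in EUR bn).
# Values as published in the finalised Basel III standard; verify before use.
BI_BUCKETS = [(1.0, 0.12), (30.0, 0.15), (math.inf, 0.18)]


def business_indicator_component(bi_bn: float) -> float:
    """Apply the marginal coefficients to each slice of the BI."""
    bic, lower = 0.0, 0.0
    for upper, coeff in BI_BUCKETS:
        if bi_bn > lower:
            bic += (min(bi_bn, upper) - lower) * coeff
        lower = upper
    return bic


def internal_loss_multiplier(loss_component_bn: float, bic_bn: float) -> float:
    """ILM = ln(e - 1 + (LC / BIC) ** 0.8); equals 1 when LC == BIC."""
    return math.log(math.e - 1 + (loss_component_bn / bic_bn) ** 0.8)


def sa_capital(bi_bn: float, avg_annual_loss_bn: float) -> float:
    """OpRisk capital under the SA, in EUR bn (illustrative sketch only)."""
    bic = business_indicator_component(bi_bn)
    if bi_bn <= 1.0:
        return bic  # bucket 1 banks: ILM is set to 1
    loss_component = 15 * avg_annual_loss_bn  # 15 x average annual losses
    return bic * internal_loss_multiplier(loss_component, bic)
```

Note that nothing in the calculation refers to a bank's current risk profile or the quality of its controls; the only bank-specific inputs are accounting figures and past losses.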

The Rematch

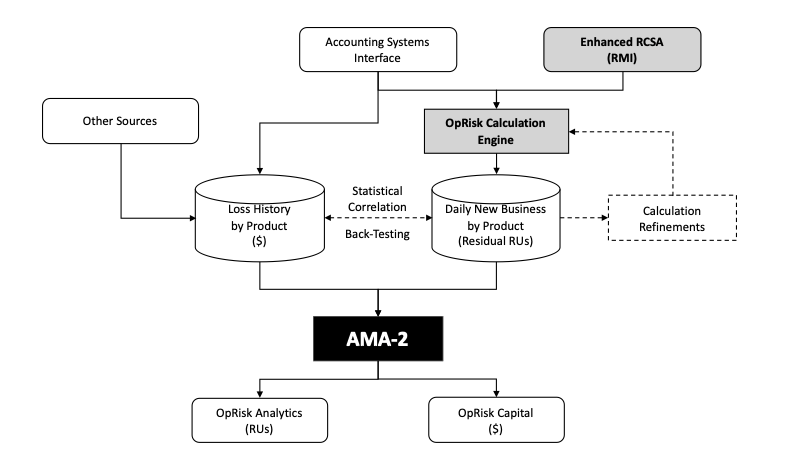

After such a humiliating defeat, banks will need to be well prepared when they return to the regulatory chessboard for a rematch. The most critical aspect is the opening they will play. The diagram above shows a suggested next-generation approach, AMA-2, that has the potential to overcome the regulators' SA defense. It introduces two new modules built on the risk unit (RU), a new, additive metric designed to express all forms of exposure to OpRisk, to create an integrated AMA-2 framework that combines stochastic modelling with accounting, operating, risk and loss data:

The first is an Enhanced Risk & Control Self-Assessment (E-RCSA) that replaces traffic-light, or “RAG,” assessment metrics with expertly calibrated numeric weights and risk factors. This enables the calculation of a risk mitigation index (RMI) for each business component that completes an assessment - a measure, on a scale of zero to 100, of risk mitigation effectiveness.

The second is an OpRisk Calculation Engine that interfaces with accounting, operating and E-RCSA systems to produce daily calculations of maximum OpRisk exposures (Inherent RUs) by product that are combined with RMIs to produce actual OpRisk exposures (Residual RUs).

AMA-2 produces daily OpRisk analytics in RUs by business component (cost center), product, customer, and location with the facility to monitor accumulating exposures to risk against pre-approved risk limits also set in RUs. AMA-2's analytics in RUs are directly comparable across the vertical and horizontal dimensions of the enterprise.
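
The flow from Inherent RUs to Residual RUs and limit monitoring can be sketched in a few lines. The article does not state how an RMI is combined with an Inherent RU, so the formula used here (Residual RU = Inherent RU x (1 - RMI / 100)) is an assumption made purely for illustration, as are the `Exposure` record, the function names and the sample limits.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Exposure:
    cost_center: str    # business component completing the E-RCSA
    product: str
    inherent_ru: float  # maximum OpRisk exposure, in RUs
    rmi: float          # risk mitigation index, 0 to 100


def residual_ru(e: Exposure) -> float:
    """ASSUMED combination rule: an RMI of 100 mitigates the exposure fully."""
    if not 0 <= e.rmi <= 100:
        raise ValueError("RMI must lie between 0 and 100")
    return e.inherent_ru * (1 - e.rmi / 100)


def residual_by_cost_center(exposures: list[Exposure]) -> dict[str, float]:
    """RUs are additive, so residual exposures can simply be summed."""
    totals: dict[str, float] = defaultdict(float)
    for e in exposures:
        totals[e.cost_center] += residual_ru(e)
    return dict(totals)


def limit_breaches(totals: dict[str, float],
                   limits: dict[str, float]) -> dict[str, float]:
    """Return cost centers whose Residual RUs exceed their approved limit."""
    return {cc: ru for cc, ru in totals.items()
            if ru > limits.get(cc, float("inf"))}


# Hypothetical sample data for one day's calculation.
book = [
    Exposure("CC1", "loans", 1000.0, 80.0),
    Exposure("CC1", "fx", 500.0, 50.0),
    Exposure("CC2", "loans", 400.0, 25.0),
]
totals = residual_by_cost_center(book)
breaches = limit_breaches(totals, {"CC1": 400.0, "CC2": 350.0})
```

Because the metric is additive, the same summation could be keyed by product, customer or location instead of cost center, which is what makes the outputs comparable across the enterprise.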

Combining exposure-to-risk in Residual RUs and loss history within AMA-2's stochastic modelling creates the potential, over time, to achieve high-precision loss predictions and capital calculations through dynamic statistical correlation and back-testing.

Where Next?

The success of initial tests of the RU, described in the GARP article “Operational Risk Metrics - Seeing is Believing,” leads researchers to believe that the AMA-2 opening is capable of overcoming the regulators' SA defense. However, banks will need to build confidence in the new opening before agreeing to a rematch.

In that regard, much work remains to be done.

Peter Hughes (phughes@rasb.org) is chairman of the Risk Accounting Standards Board. He is also a visiting fellow and advisory board member of the Durham University Business School's Centre for Banking, Institutions and Development (CBID), where he leads research into risk-based accounting systems. He was formerly a banker with JPMorgan Chase.