For centuries, physicists have tried to arrive at a “theory of everything,” or one grand cosmic principle that explains the mechanics of the universe. Einstein came close with his theory of relativity, which spoke of gravity as the unifying property of space and time. Quantum physics went deeper, seeking to understand the universe at an atomic and subatomic level. Then came string theory, looking to solve the problem of quantum gravity.

While a unifying theory remains elusive, the quest for one bears some similarities to the evolution of operational resilience. Just as physicists have tried to explain the universe through various theories, regulators have tried to address risks, data quality, resolution and resilience issues by issuing a range of rules, standards and frameworks, such as BCBS 239, CBEST and resolution/recovery planning requirements.

But in mid-2018, for the first time, the UK Prudential Regulation Authority (PRA), the Financial Conduct Authority (FCA) and the Bank of England (BoE) joined forces to issue a discussion paper on a unifying approach to operational resilience and its supervision in the financial services sector. Their recommendations built on earlier regulatory guidance to emphasize the importance of implementing systems, plans and processes that will allow financial institutions to absorb and adapt to disruptions, rather than contribute to them.

Nine months later, the FCA published its business plan for 2019-20, which lists operational resilience as a top priority. Clearly, the bar has been raised in terms of the operational standards that financial institutions will be expected to meet going forward.

Why Resilience Matters

Around the globe, operational risk events are on the rise. If it's not a cyber incident, like the Equifax data breach that impacted about 148 million customers, it's an IT meltdown, like the TSB Bank systems failure that cost the organization £330m ($422.33 million) and 80,000 customers. Or it's a money-laundering scandal, like the one at Danske Bank that continues to ripple out across Europe's banking industry.

Therein lies the rub. Operational disruptions have not abated and are no longer isolated events, but, rather, potentially systemic issues with real economic, safety and security effects.

What's more, each operational risk event has the power to amplify other risks. A cyber‐attack doesn't just impact security or critical infrastructure. It also affects market capitalization, earnings, reputation and integrity. What, then, can financial institutions do to build their operational resilience, and safeguard the stability of the financial system?

Here are a few key steps:

- Target the right things. Define the organization's key business services or critical economic functions; set internal and external impact tolerances; and understand internal and external dependencies.

- Build resilience. Perform scenario analyses and develop a communication plan and stakeholder map.

- Practice responses. Provide regulators access to test data.

Let's now take a more in‐depth look at each of these objectives.

Targeting the Right Things

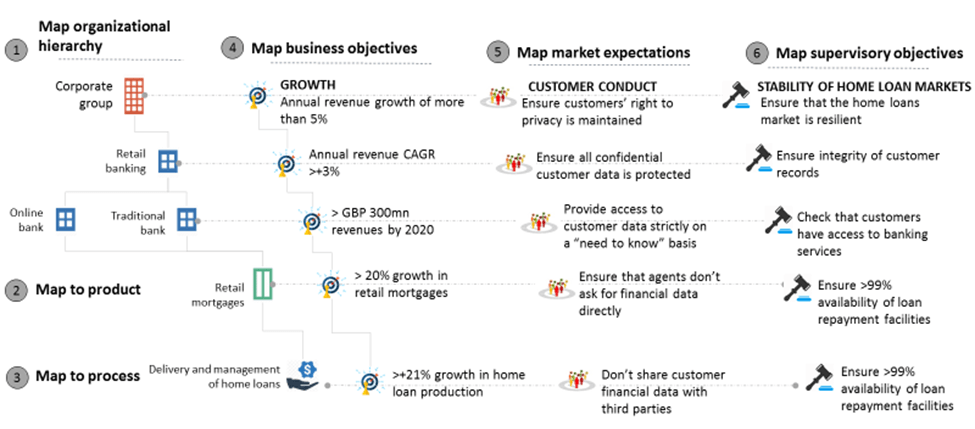

The first step in building an operational resilience framework is to identify the most important business services in the organization. That starts with creating a relational data framework where organizational hierarchies are aligned to business services/processes, business objectives, market expectations and regulatory objectives.

For example, in Figure 1, a financial institution's retail banking division can be aligned to its retail mortgage loans service. The business objective would be to achieve a 20% or higher growth in mortgage loans. Meanwhile, the market expectation would be to protect customer financial data, which, in turn, maps to the supervisory objective of ensuring the integrity of customer records.

Figure 1: Building a Relational Data Framework
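As a rough illustration, the alignment described above could be captured as one record per business service. The sketch below is a minimal Python example under stated assumptions, not a prescribed data model: the `ServiceMapping` class, its field names and the `services_for_unit` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceMapping:
    """One row of the framework: a business unit aligned to a service and its objectives."""
    business_unit: str
    business_service: str
    business_objective: str
    market_expectation: str
    supervisory_objective: str


# The single example row comes from the retail mortgage illustration in the text.
mappings = [
    ServiceMapping(
        business_unit="Retail banking",
        business_service="Retail mortgage loans",
        business_objective="Achieve 20% or higher growth in mortgage loans",
        market_expectation="Protect customer financial data",
        supervisory_objective="Ensure the integrity of customer records",
    ),
]


def services_for_unit(rows, unit):
    """Return the business services aligned to a given business unit."""
    return [row.business_service for row in rows if row.business_unit == unit]


print(services_for_unit(mappings, "Retail banking"))
```

Keeping every objective on the same record makes it straightforward to trace a service back to the supervisory objective it supports.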

Once the relational data framework has been mapped, we can use a scorecard approach to measure the importance of each business service in terms of its financial viability, impact on customer/market participation and impact on financial stability. The scores can then be compared with each other to arrive at a final list of the most important business services.
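One possible way to implement such a scorecard is a weighted sum across the three criteria. The following sketch assumes a 1-to-5 scoring scale and illustrative weights; the weights, the scores and the services other than retail mortgage loans are hypothetical and would need to be set by each institution.

```python
# Hypothetical weights for the three criteria named in the text (they sum to 1).
WEIGHTS = {
    "financial_viability": 0.4,
    "customer_market_participation": 0.3,
    "financial_stability": 0.3,
}


def importance_score(scores, weights=WEIGHTS):
    """Weighted sum of a service's criterion scores (assumed scale: 1 = low, 5 = high)."""
    return sum(weights[criterion] * scores[criterion] for criterion in weights)


def rank_services(scorecards, top_n):
    """Return the names of the top_n services, highest importance score first."""
    ranked = sorted(
        scorecards,
        key=lambda name: importance_score(scorecards[name]),
        reverse=True,
    )
    return ranked[:top_n]


# Illustrative scores only; none of these numbers come from a real institution.
scorecards = {
    "Retail mortgage loans": {
        "financial_viability": 5,
        "customer_market_participation": 4,
        "financial_stability": 3,
    },
    "Retail payments": {
        "financial_viability": 4,
        "customer_market_participation": 5,
        "financial_stability": 5,
    },
    "Staff expense claims": {
        "financial_viability": 1,
        "customer_market_participation": 1,
        "financial_stability": 1,
    },
}

print(rank_services(scorecards, top_n=2))
```

A weighted sum is only one option; an institution might instead treat a high score on any single criterion as sufficient to flag a service as important.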

The next step is to set impact tolerances that quantify the amount of disruption that an organization is prepared to bear in the event of an incident. These tolerances can be defined in the context of specific metrics, as well as disruptive outcomes - e.g., threats to business viability, harm to consumers/markets and instability in the financial system.

As an example, let's look at home loans. Assume that two days is the maximum acceptable time for an organization to process new loan applications pending approval. That would be the impact tolerance level. If this threshold is breached, business viability could be threatened. To test if the organization is well within its threshold levels, one can measure the value of total pending loan payments, as well as the value of total loan applications pending.

Similarly, let's assume that the maximum number of exceptions raised for the manual due diligence of customer financial records shouldn't exceed 0.1% of all new loan applications. If it does, it could harm consumers and markets. To test adherence to this tolerance level, one could measure the volume of customer and prospect accounts.
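The two examples above can be expressed as simple threshold checks. This is a minimal sketch, assuming each tolerance is a single upper limit on one metric; the `ImpactTolerance` class, the `exception_share` helper and the observed values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImpactTolerance:
    """An upper limit on a metric, tied to the disruptive outcome it guards against."""
    name: str
    limit: float
    outcome_at_risk: str

    def is_breached(self, observed: float) -> bool:
        # A tolerance is breached only when the observed value exceeds the limit.
        return observed > self.limit


# Limits taken from the home loan examples in the text.
processing_time = ImpactTolerance(
    name="Days to process new loan applications pending approval",
    limit=2.0,
    outcome_at_risk="business viability",
)
exception_rate = ImpactTolerance(
    name="Manual due diligence exceptions as a share of new loan applications",
    limit=0.001,  # 0.1%
    outcome_at_risk="harm to consumers and markets",
)


def exception_share(exceptions: int, new_applications: int) -> float:
    """Share of new loan applications that raised a due diligence exception."""
    if new_applications <= 0:
        raise ValueError("new_applications must be positive")
    return exceptions / new_applications


# Hypothetical observations for illustration.
observed_days = 1.5
observed_rate = exception_share(exceptions=12, new_applications=10_000)

print(processing_time.is_breached(observed_days))
print(exception_rate.is_breached(observed_rate))
```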

Setting and measuring impact tolerances in this way enables financial institutions to establish an operational resilience program that focuses on the right things, while prioritizing resources and enabling better‐informed business decisions.

The next step is to understand upstream and downstream dependencies. This goes back to the relational data framework we talked about earlier, except that we're now digging deeper and integrating a wider range of elements.

If the earlier process mapping went as far as the delivery and management of home loans, we're now drilling down to sub‐processes such as sales, underwriting, payment and valuation. We're also mapping related systems (e.g., CRM, core banking and faster payments), assets (e.g., customer data), internal SLAs and third parties (e.g., loan due diligence vendors, system integrators and customer service captive units).

The idea is to create one unified data model that links together all the touch points impacting the delivery of products and services. This sets a foundation on which organizations can pinpoint potential risks, vulnerabilities, issues, incidents and failures that could impact their operational resilience.
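A unified model of this kind can be thought of as a dependency graph. The sketch below is one hedged way to represent it in Python: the node names come from the home loans example, but the specific links between them (for instance, which sub-process relies on which system) are assumptions made for illustration, as are the function names.

```python
from collections import deque

# Each key depends on the items in its list. The links are illustrative assumptions.
DEPENDS_ON = {
    "Home loans": ["Sales", "Underwriting", "Payment", "Valuation"],
    "Sales": ["CRM"],
    "Underwriting": ["Loan due diligence vendor", "Customer data"],
    "Payment": ["Core banking", "Faster payments"],
    "Valuation": [],
    "Core banking": ["System integrator"],
}


def all_dependencies(node, graph=DEPENDS_ON):
    """Return every direct and indirect dependency of a node (breadth-first walk)."""
    seen = set()
    queue = deque(graph.get(node, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        queue.extend(graph.get(current, []))
    return seen


def impacted_by(component, graph=DEPENDS_ON):
    """Return, in alphabetical order, every node that relies on the given component."""
    return sorted(node for node in graph if component in all_dependencies(node, graph))


print(impacted_by("System integrator"))
```

Walking the graph in both directions is what lets a single third-party failure be traced up to the business service it ultimately affects.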

Building Resilience

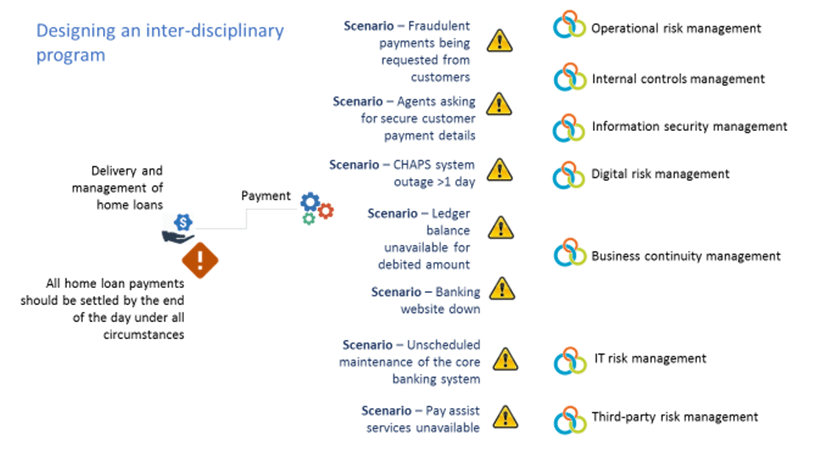

Once the data framework and impact tolerances have been defined, the next step is to create scenarios that identify potential points of failure. At this point, it's important to collaborate with various specialists across the organization - be they operational risk managers, internal controls experts, IT security officers, third-party teams or business continuity functions. Only through an interdisciplinary effort can organizations gain a truly comprehensive understanding of the risk scenarios that could disrupt their business.

As an example, below is a list of potential scenarios related to the delivery and management of home loans:

Figure 2: Creating Risk Scenarios

After we create the scenarios, we overlay them on the relational data framework to understand how they impact the business at various levels, including a people level, asset level, process level, system level and third-party level. For instance, if the banking website goes down, it could impact system dependencies, including mobile apps and core banking systems. Similarly, if the ledger balance is unavailable for a debited amount, it could impact critical assets such as bank ledgers and customer data.
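The overlay described above could be recorded as a mapping from each scenario to the items it touches at each level. This is a small sketch under that assumption; the two scenarios and their impacts come from the examples in the text, while the structure and function names are hypothetical.

```python
# The five levels named in the text.
LEVELS = ("people", "asset", "process", "system", "third_party")

# Scenario -> level -> impacted items, using the two examples from the text.
SCENARIO_IMPACTS = {
    "Banking website goes down": {
        "system": ["Mobile apps", "Core banking systems"],
    },
    "Ledger balance unavailable for a debited amount": {
        "asset": ["Bank ledgers", "Customer data"],
    },
}


def impact_by_level(scenario, impacts=SCENARIO_IMPACTS):
    """Return the impacted items for every level, with an empty list where none are recorded."""
    touched = impacts[scenario]
    return {level: list(touched.get(level, [])) for level in LEVELS}


def scenarios_touching(item, impacts=SCENARIO_IMPACTS):
    """Return, in alphabetical order, the scenarios that impact a given item at any level."""
    return sorted(
        scenario
        for scenario, touched in impacts.items()
        if any(item in items for items in touched.values())
    )


print(impact_by_level("Banking website goes down"))
print(scenarios_touching("Customer data"))
```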

Once these dependencies have been understood, we can go further and identify the potential gaps in individual programs that could amplify the impact of a particular risk scenario. This exercise also considers the points of interaction between various risk disciplines, such as IT risk management and internal controls management.

Let's assume the scenario of a customer being unable to complete a home loan payment due to operational issues with the payment process. That becomes an issue for the operational risk management function.

The potential gaps or points of failure that could compound this scenario include the following:

- Third‐party management doesn't have a risk prevention strategy that includes proactive system maintenance schedules;

- Business continuity management doesn't have a redundancy strategy that includes backup servers and a continuity plan for the banking portal;

- IT risk management doesn't have a fallback strategy to re-route payments through mobile apps instead of the online portal; and

- Internal control management doesn't ensure that there are measures in place for all payments initiated through CHAPS to be cleared by the end of the day.

Once these gaps have been identified, they can be addressed proactively.

The next step is to rank the risk scenarios based on their impact on organizational viability, customer/market participation and financial stability. Thereafter, a risk mitigation or action plan can be defined. A typical action plan would include steps to assess internal capital adequacy, prioritize the recovery of critical scenarios, design a business continuity plan and update governance frameworks and controls.

As part of organizational change management, a communication plan should be established - one that maps internal and external stakeholders in one framework to demonstrate lines of responsibility and accountability during a risk scenario.

For instance, if online payment systems are down, the system administrator should know that they are responsible for communicating with system architects to re-route payments through faster payments systems. They should also be aware that they are required to keep the loan officer informed. The loan officer, in turn, would need to communicate the issue to other banks, customers and the treasury.

Through this streamlined and practiced workflow, the impact of a disruption can be swiftly contained.
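The escalation chain in the online payments example can be sketched as a small stakeholder map that is walked in order. This is an illustrative sketch only: the roles come from the example above, while the `NOTIFIES` structure and the `notification_order` function are hypothetical.

```python
from collections import deque

# Who informs whom during the "online payment systems are down" scenario.
NOTIFIES = {
    "System administrator": ["System architects", "Loan officer"],
    "Loan officer": ["Other banks", "Customers", "Treasury"],
}


def notification_order(first_responder, plan=NOTIFIES):
    """Return (sender, recipient) pairs in the order messages would be sent."""
    order = []
    seen = {first_responder}
    queue = deque([first_responder])
    while queue:
        sender = queue.popleft()
        for recipient in plan.get(sender, []):
            if recipient not in seen:  # each stakeholder is notified only once
                seen.add(recipient)
                order.append((sender, recipient))
                queue.append(recipient)
    return order


for sender, recipient in notification_order("System administrator"):
    print(f"{sender} -> {recipient}")
```

Writing the plan down as data also makes gaps visible, such as a stakeholder that no one is responsible for informing.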

Practicing Responses

A risk mitigation plan by itself isn't enough. Regular testing of the plan is also essential in ensuring its effectiveness. These tests can take the form of scenario-based questionnaires, simulations, expert table-top exercises and thematic reviews.

Consider the scenario of unscheduled core banking system maintenance. As a test exercise, the relevant stakeholders might be given a survey to provide evidence that important business services have been identified, that impact tolerance levels have been defined, and that an operational resilience plan is in place to manage the disruption.

Simulation exercises might also be conducted to check the efficacy of existing communication plans. Table-top exercises, meanwhile, would demonstrate the organization's scenario planning and capital planning effectiveness.

Lastly, a thematic review of the mitigation program would use feedback loops, as well as predictive and prescriptive analytics, to identify gaps or areas of concern that need to be addressed as a priority.

Gearing Up

On April 10, 2019, scientists unveiled the first image of a black hole, thereby validating decades of theoretical science and confirming Einstein's predictions. Still, many questions persist. How do general relativity and quantum mechanics fit together? Is string theory correct? What is the fate of the universe?

The publication of the PRA/FCA/BoE discussion paper on operational resilience also raises some pertinent questions. For instance, could evidence of better operational resilience measures lead to a decrease in regulatory-mandated capital buffers? Moreover, could a robust approach to operational resilience provide a better understanding of the impact of resolution than a “living will”?

We don't know the answers to these questions yet, but there is no doubt that a strong resilience program will be key in strengthening credibility and trust with both regulators and customers, while sustaining business performance through disruptions. What remains to be seen is how effectively financial institutions rise to the challenge.

Brenda Boultwood is the senior vice president of industry solutions at MetricStream. She is responsible for a portfolio of key industry verticals, including energy and utilities, federal agencies, strategic banking and financial services. Prior to joining MetricStream, she served as senior vice president and chief risk officer at Constellation Energy. Before that, she worked as the global head of strategy, Alternative Investment Services, at J.P. Morgan Chase, where she developed the strategy for the company's hedge fund services, private equity fund services, leveraged loan services and global derivative services. She has also been a board member of the Global Association of Risk Professionals (GARP), and currently serves on the board of the Committee of Chief Risk Officers (CCRO).