Risk Insights

Your dedicated resource for the latest articles, podcasts, webcasts, reports, and more.

As Private Markets Boomed, So Did Initiatives to Close Data Gaps

April 10

Acquisitions, partnerships and new ventures are “institutionalizing” infrastructure.

Regulatory Reporting: The Need for Interoperability

April 10

If achieved by a data-point-based, rather than a report-based, reporting method, interoperability ca...

A Smarter Path to Net Zero: Combining Financial Engineering With Energy Policy Innovation

April 7

Climate-linked convertible bonds can be beneficial for investors, power generators, and consumers by...

To Be a Great Risk Manager, Think Tragically

April 2

The modern Chief Risk Officer must think tragically to avoid tragedy, navigating the productive tens...

After 13 Years, Compliance with the BCBS Risk Data Principles Is Incomplete

April 2

The Basel Committee acknowledges challenges and “remains committed” to helping address them. The Eur...

Don't Miss an Insight

We've made it easy to stay informed. Sign up now and get the latest in risk thought leadership delivered straight to your inbox each week.

Stress Testing: Current Issues, Regulatory Analysis, and a Sneak Peek at the Future

Hear from Cristian deRitis, deputy chief economist at Moody’s Analytics, on the stress testing impac...

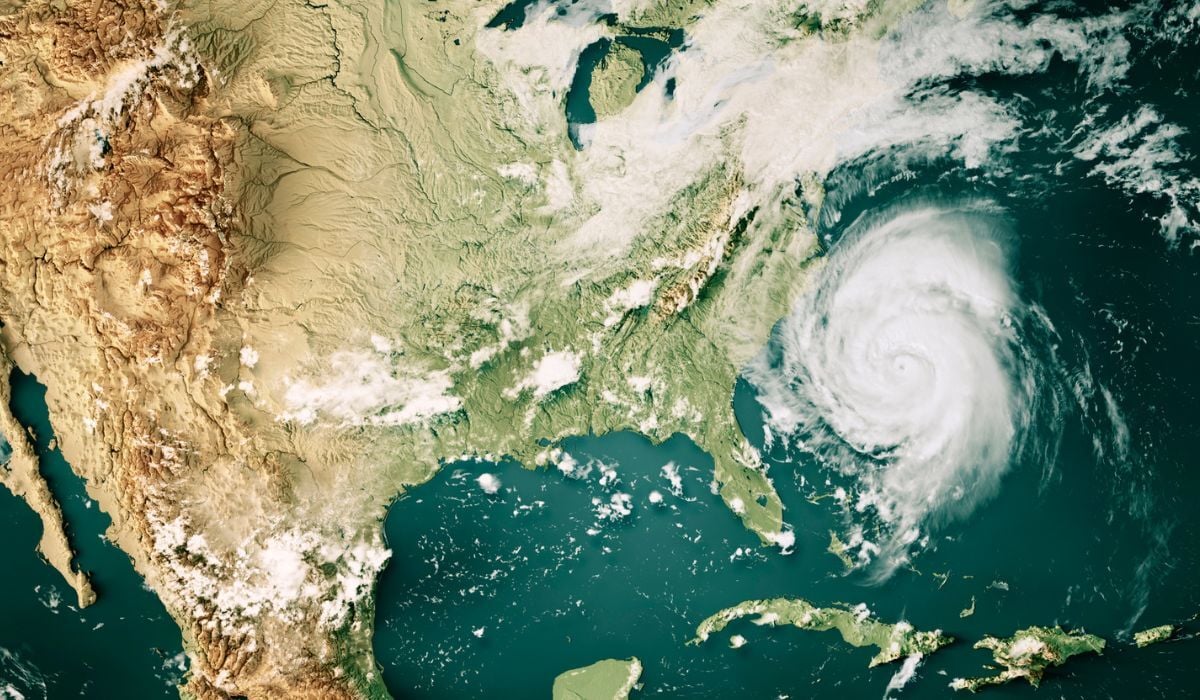

A Risk Professional’s Guide to Physical Risk Assessments

Climate change is driving an increase in the frequency and severity of physical risk events, such as...

Featured Columns

Cracks in the Foundation? Five Structural Risks for 2026.

By Cristian deRitis | January 16, 2026

Chapter Meeting

Front-to-Back Liquidity: Building Resilience Across the Lines

April 16, 2026 | 5:30 PM

In-Person | SMBC, 277 Park Ave, New York, NY 10172
