Risk Insights

Your dedicated resource for the latest articles, podcasts, webcasts, reports, and more.

The Geopolitical Root Cause: When Macro Shocks Become Idiosyncratic Realities

June 5

We spent 30 years architecting the “optimized” enterprise on the assumption of perpetual commercial ...

Meeting the Moment with Multi-Scenario Planning

June 5

Understanding “multiple plausible futures” is seen as increasingly imperative. Adding “resilience ar...

Faster Visibility versus Firmer Forecasts: A Commodity Risk Management Perspective

June 5

Amid uncertainties stemming from successive market shocks, the strategic questions are familiar. New...

The Lottery Ticket Economy

May 29

With implications extending well beyond consumer protection, “this behavioral shift is affecting cre...

Investor Climate Action: What Finance Can and Can’t Achieve

May 28

Hear from Prof. Tom Gosling, Director of the Initiative in Sustainable Finance at the London School ...

Don't Miss an Insight

We've made it easy to stay informed. Sign up now and get the latest in risk thought leadership delivered straight to your inbox each week.

Stress Testing: Current Issues, Regulatory Analysis, and a Sneak Peek at the Future

Hear from Cristian deRitis, deputy chief economist at Moody’s Analytics, on the stress testing impac...

Inside the Mind of a Buy-Side CRO

Hear from Peter Mortensen, the chief risk officer of Russell Investments, about inflation volatility...

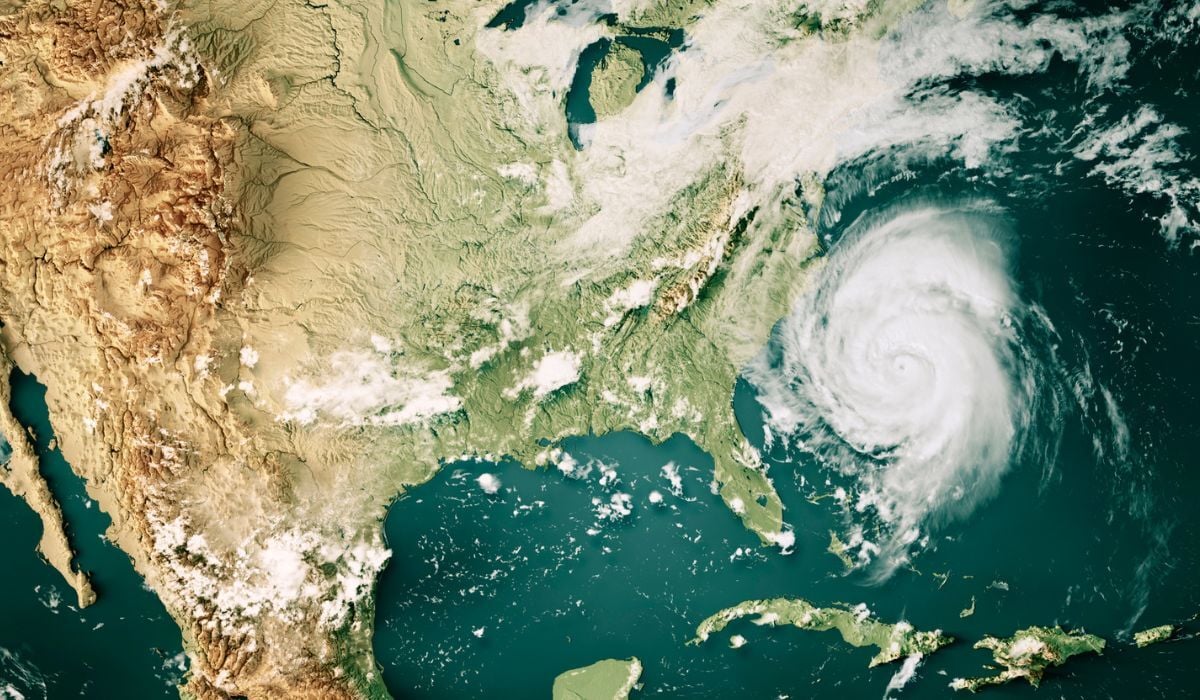

A Risk Professional’s Guide to Physical Risk Assessments

Climate change is driving an increase in the frequency and severity of physical risk events, such as...

Featured Columns

The Geopolitical Root Cause: When Macro Shocks Become Idiosyncratic Realities

By Brenda Boultwood | June 5, 2026

From Imagination to Exploration: Scenario Design in a Nonlinear World

By Alla Gil | April 24, 2026
